If you had told a WebXR developer two years ago that an AI agent would be able to build a complete VR experience, load it in a browser, take a screenshot to check if it looks right, diagnose a physics bug through the scene graph, fix the code, and reload, all without a human touching the keyboard, they probably would have laughed. That future arrived faster than anyone expected.

AI Agents Can Now Build, Test, and Debug WebXR Apps Without You

Meta's Immersive Web SDK (IWSDK) now ships with a full AI-assisted development pipeline, and Google's Vibe Coding XR (being presented this week at ACM CHI 2026 in Barcelona) takes a complementary approach by letting anyone describe a VR experience in plain English and have it generated in seconds. Together, these two toolkits represent the most significant shift in how XR content gets made since WebXR became a real standard.

Meta's IWSDK: AI That Can See Your VR App

The Immersive Web SDK, which Meta open-sourced under the MIT license at Connect 2025, already made WebXR development significantly easier. Built on Three.js with a high-performance Entity Component System, it handles physics (via Havok), hand tracking, grab interactions, locomotion, and spatial audio out of the box. Running `npm create @iwsdk@latest` spins up a working VR project in under a minute.
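For readers new to the Entity Component System pattern, here is a minimal sketch of the general idea in TypeScript. It is not IWSDK's actual API: `World`, `Position`, `Velocity`, and `movementSystem` are hypothetical names chosen for illustration. Entities are plain IDs, components are plain data attached to those IDs, and systems are functions that run over every entity holding the components they care about.

```typescript
// Illustrative ECS sketch. NOT the IWSDK API; every name here is hypothetical.
type Entity = number;

interface Position { x: number; y: number; z: number }
interface Velocity { x: number; y: number; z: number }

class World {
  private nextId = 0;
  // Each component type lives in its own store, keyed by entity ID.
  readonly positions = new Map<Entity, Position>();
  readonly velocities = new Map<Entity, Velocity>();

  createEntity(): Entity {
    return this.nextId++;
  }
}

// A system: advances every entity that has both a velocity and a position.
function movementSystem(world: World, dt: number): void {
  world.velocities.forEach((vel, entity) => {
    const pos = world.positions.get(entity);
    if (!pos) return; // has velocity but no position: nothing to move
    pos.x += vel.x * dt;
    pos.y += vel.y * dt;
    pos.z += vel.z * dt;
  });
}

const world = new World();
const ball = world.createEntity();
world.positions.set(ball, { x: 0, y: 1, z: 0 });
world.velocities.set(ball, { x: 2, y: 0, z: 0 });

// Simulate half a second: the ball moves 1 unit along x.
movementSystem(world, 0.5);
console.log(world.positions.get(ball));
```

Because state lives in plain component data rather than inside deep object hierarchies, it is also straightforward for an external tool to inspect.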

The AI-assisted tooling layer takes things much further. When you create a new IWSDK project, it now comes preconfigured with a RAG server that indexes the entire IWSDK codebase (over 3,300 code chunks with semantic search across 8 specialized tools) and an MCP server that exposes 32 tools to any compatible AI agent. That means the agent doesn't just write code blindly. It can query the scene graph, inspect entity states, read console logs, take screenshots of the running experience, and diagnose what went wrong, all through a structured API.
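To make "a structured API" concrete: MCP is built on JSON-RPC 2.0, and an agent invokes a tool by sending a `tools/call` request carrying the tool's name and arguments. The sketch below builds such requests in TypeScript. The tool names (`take_screenshot`, `query_entity`) and the `entityId` argument are assumptions for illustration only; the names of IWSDK's 32 tools are not listed here.

```typescript
// Shape of an MCP tool invocation (JSON-RPC 2.0, method "tools/call").
interface ToolCallRequest {
  jsonrpc: "2.0";
  id: number;
  method: "tools/call";
  params: {
    name: string;
    arguments: Record<string, unknown>;
  };
}

let nextRequestId = 1;

// Helper that wraps a tool name and its arguments in a JSON-RPC envelope.
function buildToolCall(
  name: string,
  args: Record<string, unknown> = {}
): ToolCallRequest {
  return {
    jsonrpc: "2.0",
    id: nextRequestId++,
    method: "tools/call",
    params: { name, arguments: args },
  };
}

// Hypothetical tool names; the real IWSDK tool names may differ.
const screenshotCall = buildToolCall("take_screenshot");
const entityCall = buildToolCall("query_entity", { entityId: 42 });

console.log(JSON.stringify(screenshotCall));
console.log(JSON.stringify(entityCall));
```

The point of the envelope is that any MCP-compatible agent can discover and call these tools without bespoke integration code.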

The workflow looks like this: the agent writes code, reloads the page, observes the result via screenshots and console output, queries the ECS state if something seems off, makes corrections, and repeats. Meta demonstrated the power of this approach by rebuilding Project Flowerbed from scratch in just 15 hours using this agentic workflow. The original, an immersive VR gardening experience, required tens of thousands of lines of custom code.
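That write, reload, observe, query, correct cycle can be sketched as a simple loop. Everything below is a hypothetical stand-in written for illustration: the `Runtime` and `Agent` interfaces, their method names, and the stub "gravity" bug are assumptions, not IWSDK's real tooling. A real setup would route these calls through the MCP server rather than through in-memory stubs.

```typescript
// Sketch of the agentic iteration loop. All names are hypothetical.
interface Observation {
  consoleErrors: string[];
  screenshotLooksRight: boolean;
}

interface Runtime {
  reload(code: string): void;                 // load the new build in the browser
  observe(): Observation;                     // screenshots + console output
  queryEcsState(): Record<string, unknown>;   // deeper inspection when needed
}

interface Agent {
  writeInitialCode(): string;
  reviseCode(code: string, obs: Observation, ecs: Record<string, unknown>): string;
}

interface LoopResult { code: string; iterations: number; succeeded: boolean }

function runAgentLoop(agent: Agent, runtime: Runtime, maxIterations: number): LoopResult {
  let code = agent.writeInitialCode();
  for (let i = 1; i <= maxIterations; i++) {
    runtime.reload(code);
    const obs = runtime.observe();
    if (obs.consoleErrors.length === 0 && obs.screenshotLooksRight) {
      return { code, iterations: i, succeeded: true };
    }
    // Something seems off: inspect ECS state, then correct and repeat.
    const ecs = runtime.queryEcsState();
    code = agent.reviseCode(code, obs, ecs);
  }
  return { code, iterations: maxIterations, succeeded: false };
}

// In-memory stubs: the "bug" is gravity with the wrong sign.
let loadedCode = "";
const stubRuntime: Runtime = {
  reload(code) { loadedCode = code; },
  observe() {
    const ok = loadedCode.indexOf("gravity=-9.8") !== -1;
    return { consoleErrors: ok ? [] : ["ball falls upward"], screenshotLooksRight: ok };
  },
  queryEcsState() {
    return { gravitySign: loadedCode.indexOf("gravity=-") !== -1 ? -1 : 1 };
  },
};
const stubAgent: Agent = {
  writeInitialCode: () => "gravity=9.8",
  reviseCode: (code) => code.replace("gravity=9.8", "gravity=-9.8"),
};

const result = runAgentLoop(stubAgent, stubRuntime, 5);
console.log(result);
```

The iteration cap matters in practice: without it, an agent that cannot converge on a fix would loop indefinitely.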

Google's Vibe Coding XR: Describe It, Build It

Google's approach starts from a different place but arrives at a similar conclusion: you shouldn't need deep graphics programming knowledge to create a WebXR experience.

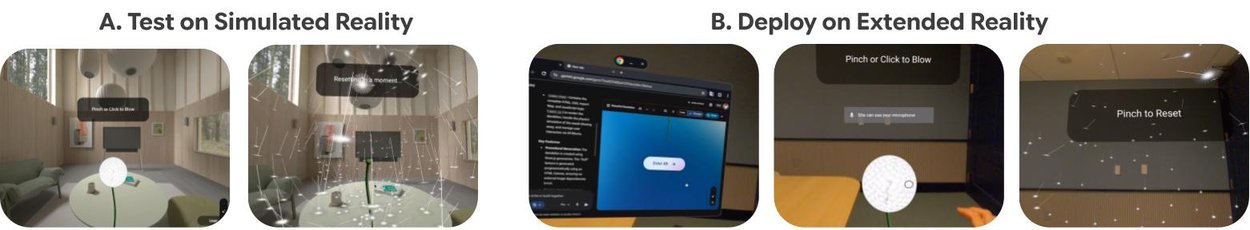

Vibe Coding XR, built on the open-source XR Blocks framework, works as a Gemini Canvas extension. You type a description like "create a physics sandbox with bouncing balls and a seesaw" and the system generates a fully interactive, physics-aware WebXR application. Google reports that using Gemini Pro, the system produces error-free results about 95% of the time.

XR Blocks itself is interesting from an architectural perspective. It introduces what Google calls a "Reality Model," a semantic layer that maps spatial computing primitives (users, physical environments, agents) to natural language concepts. The framework sits on top of WebXR, Three.js, and LiteRT.js, and it's designed from the ground up to be LLM-friendly. That's a deliberate choice: rather than retrofitting AI onto an existing framework, Google built the framework to be readable and generatable by language models.

What This Means for Developers

The practical implications are significant. WebXR already had the advantage of zero-friction distribution (share a link, open in a browser, you're in VR), but the development side still required serious Three.js and graphics programming chops. These AI tools compress the skill gap dramatically.

For experienced developers, Meta's IWSDK approach is the more powerful option. Having an AI agent that can autonomously iterate on a VR experience, inspecting the actual running scene rather than just guessing at code, means faster prototyping and fewer hours spent on tedious debugging cycles. For newcomers, teachers, designers, and anyone who wants to sketch out a spatial idea quickly, Google's prompt-to-VR pipeline is remarkably accessible.

Both frameworks are open source. Both target WebXR. Both run on Quest, Android XR devices, and (via browser support) Vision Pro. And both are available right now.

The WebXR ecosystem just crossed the 1 million monthly active users mark on Meta Quest alone. With AI removing the biggest remaining barrier to creating content for that audience, the next year should be very interesting for anyone building on the immersive web.